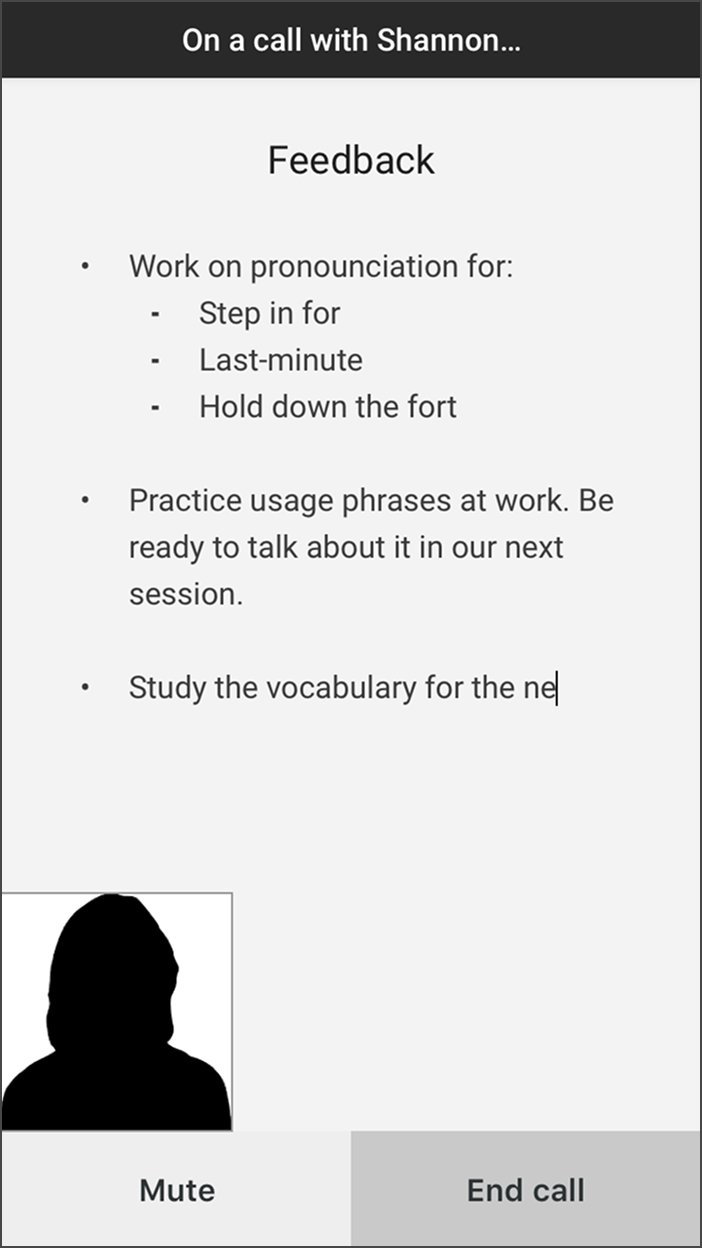

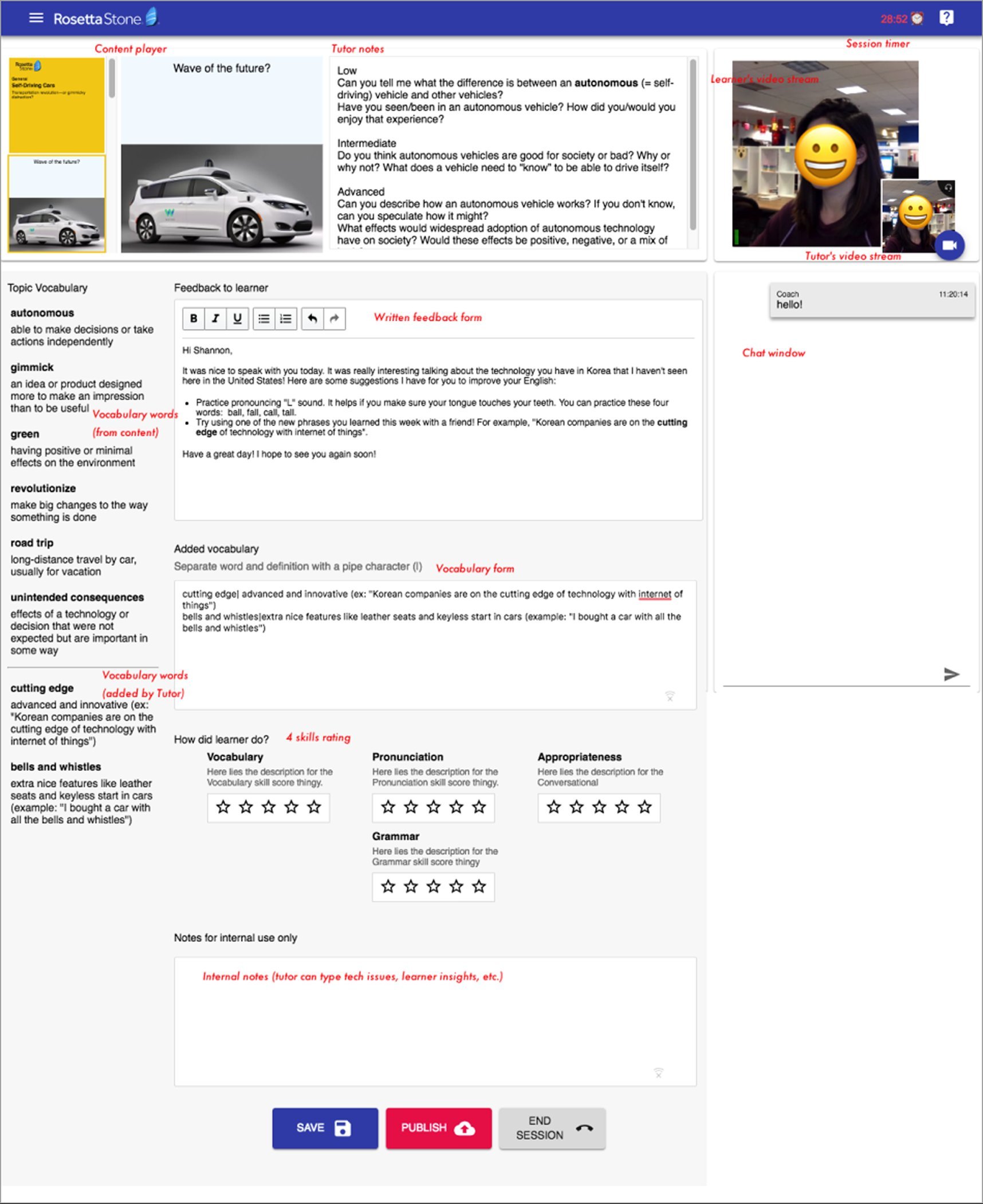

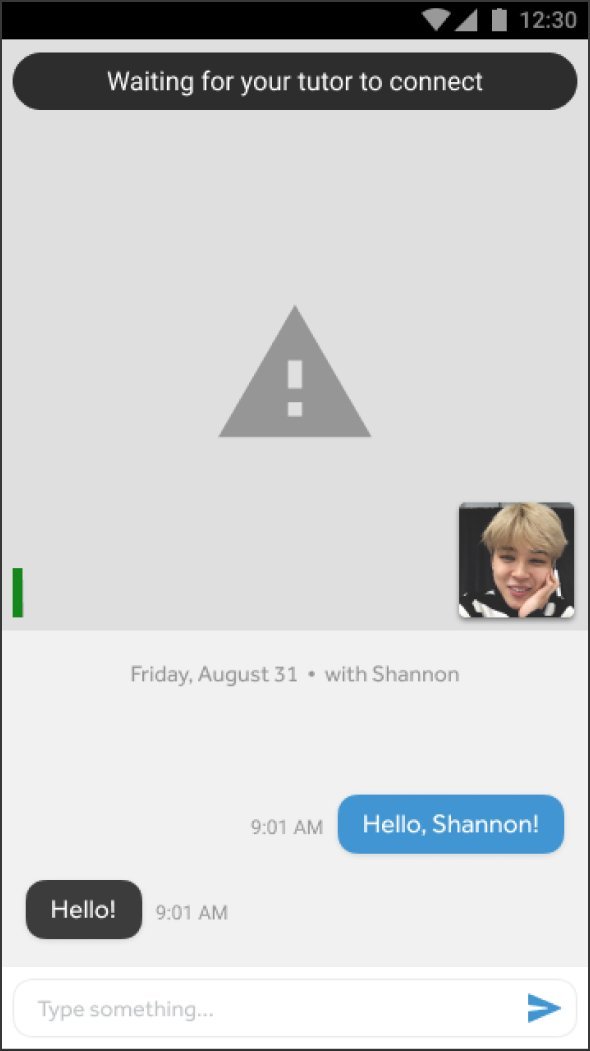

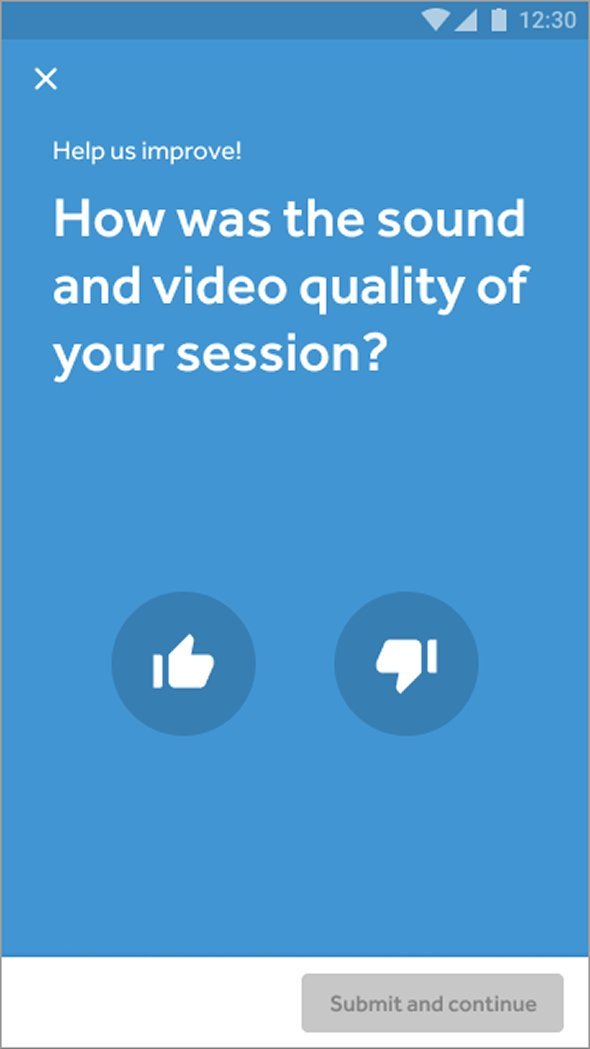

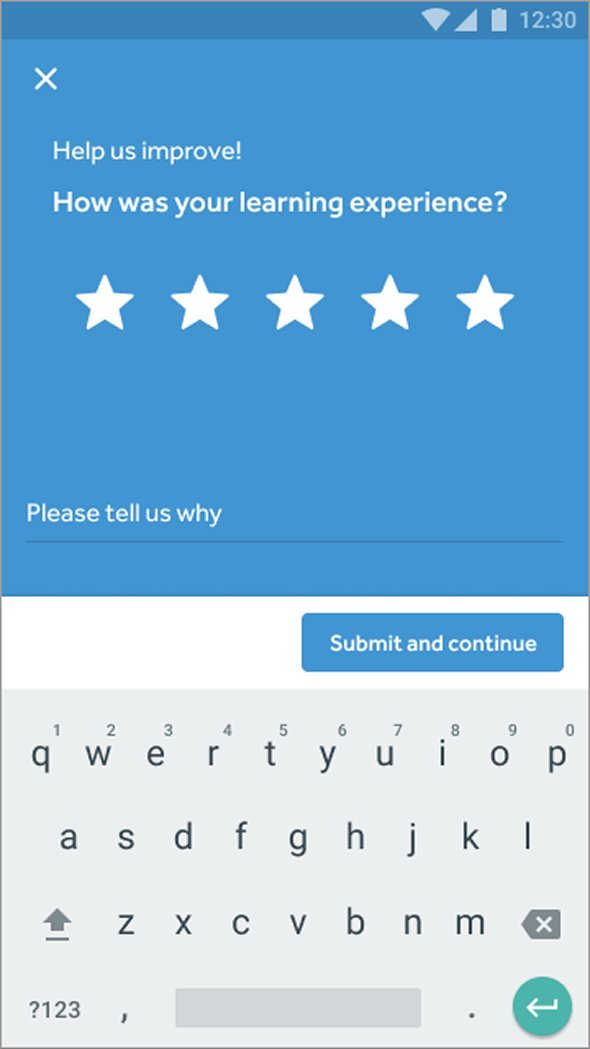

The feedback feature only worked because the tutor portal made it possible to leave feedback in five minutes. That portal was its own design problem.

Tutors had to teach, maintain eye contact with the camera, navigate slides, manage chat, add vocabulary, and rate learner performance across multiple competencies, all at once.

One tutor said:

"We only have 5 minutes between sessions, so it's stressful."

Tutor interview

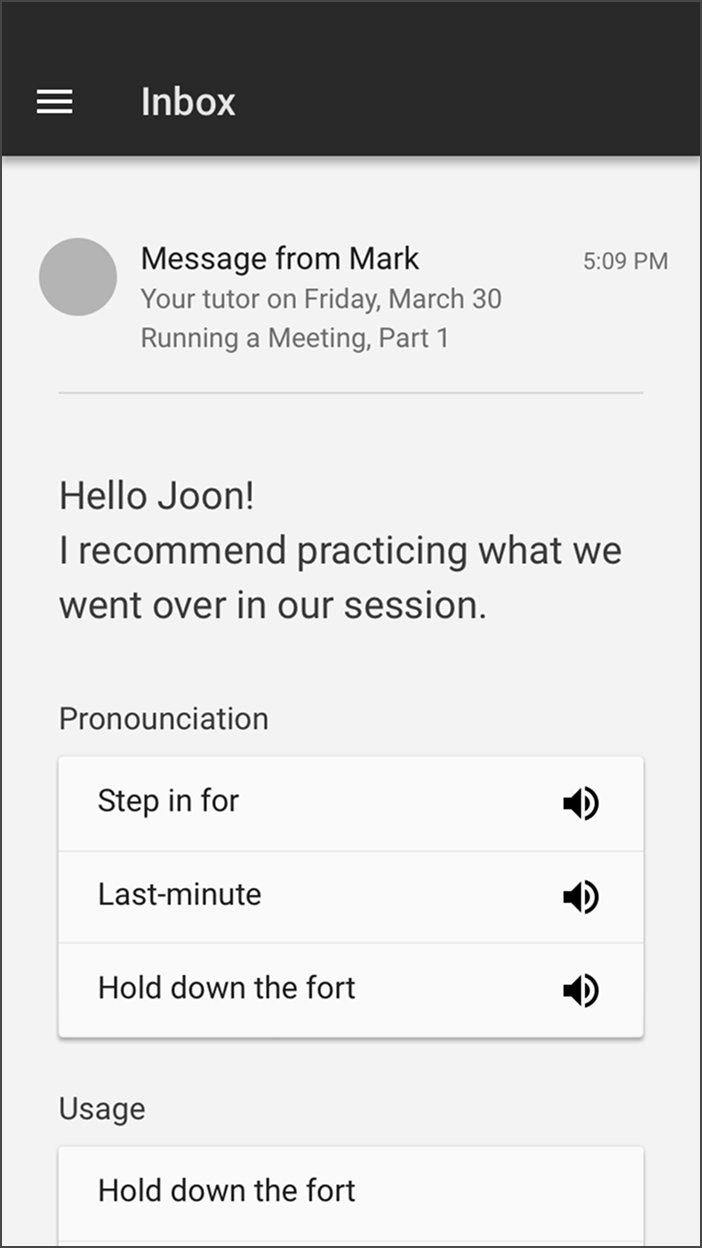

Tutors had five minutes between sessions to leave feedback, wrap up notes, and prep for the next learner. That constraint shaped two specific design moves:

- The vocabulary section uses a keyboard shortcut: type a word, hit |, type the definition, and the output formats automatically with the term in bold.

- An internal notes section, visible only to tutors, lets one tutor hand off context to the next so the first five minutes of any session aren't burned on introductions.
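The vocabulary shortcut described above could be sketched roughly as follows. This is an illustrative sketch only, not the portal's actual code: the function name `format_vocab_entry`, the markdown-style `**term**` output, and the colon between term and definition are all assumptions made for the example.

```python
def format_vocab_entry(raw: str, separator: str = "|") -> str:
    """Turn 'term | definition' into '**term**: definition'.

    Hypothetical helper: the output format (markdown bold plus a colon)
    is an assumption, not the portal's documented behavior.
    """
    # Split on the first separator only, so a definition may itself contain "|".
    term, found, definition = raw.partition(separator)
    term, definition = term.strip(), definition.strip()

    if not found or not term or not definition:
        # Not a complete entry yet; leave the tutor's text untouched.
        return raw

    return f"**{term}**: {definition}"


if __name__ == "__main__":
    print(format_vocab_entry("commute | to travel regularly to work"))
```

Returning the input unchanged for incomplete entries keeps the shortcut forgiving: a tutor typing mid-session never loses text because the separator or definition is missing.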